Moltbook’s AI Dream Meets Reality as Users Raise Security Red Flags

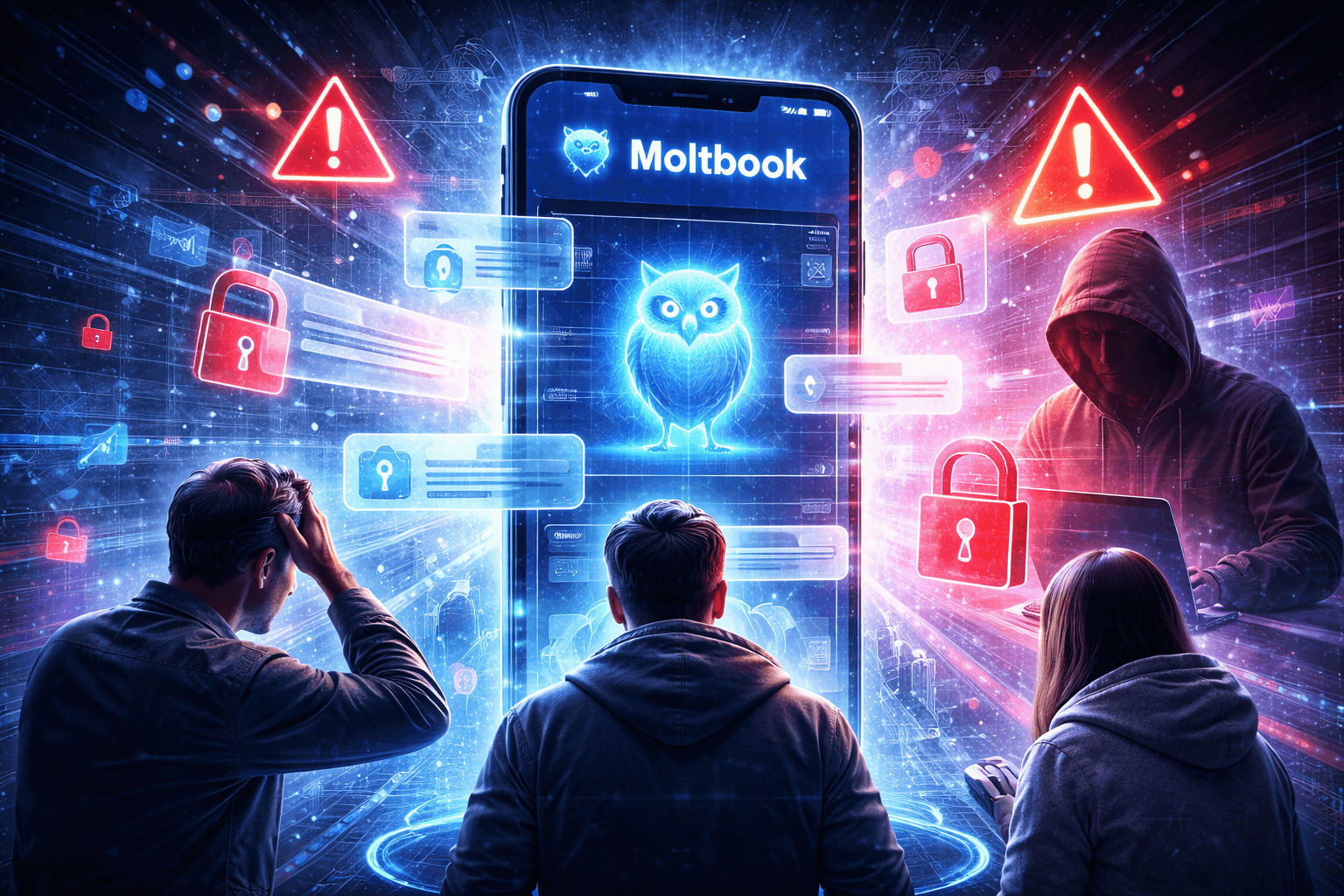

Moltbook captured attention almost overnight. Touted as a new-age, AI-powered social forum, the platform promised smarter discussions, algorithm-driven engagement, and a fresh alternative to traditional social networks. But as its user base expanded rapidly, so did concerns around security, data protection, and long-term credibility.

What once looked like the next big thing in AI social networking is now facing a wave of skepticism from users, experts, and observers who are questioning whether innovation has outpaced responsibility.

Why Moltbook Went Viral So Quickly

Moltbook positioned itself as a platform where artificial intelligence enhanced conversations, moderated discussions, and helped users discover relevant content effortlessly. Its clean interface and AI-driven features appealed strongly to early adopters who were already exploring generative AI tools.

The promise was simple yet powerful. Smarter discussions. Less noise. More meaningful engagement.

This narrative helped Moltbook grow fast, especially among tech-savvy users curious about AI-led communities.

The Security Concerns Emerging Around the Platform

As usage increased, scrutiny followed. Reports and discussions began surfacing about how user data was being handled on Moltbook. Questions were raised about what data the platform collects, how AI models process conversations, and whether sufficient safeguards are in place to protect sensitive information.

Cybersecurity experts have flagged the broader risks associated with AI social platforms that rely heavily on user-generated content. If not designed carefully, such systems can expose personal data, enable misuse, or become targets for malicious actors.

In Moltbook’s case, the lack of clear and transparent communication around data usage has amplified concerns.

Growing User Skepticism and Trust Deficit

Beyond technical security issues, trust has become a major challenge. Users have started questioning whether Moltbook is prepared to scale responsibly. Some are wary of how AI moderation works, while others are concerned about misinformation, content manipulation, or hidden biases within the system.

For a social platform, trust is foundational. Once doubts creep in, even a strong feature set struggles to retain engaged communities.

This skepticism has slowed the platform’s momentum and shifted conversations from excitement to caution.

The Broader Challenge for AI-Driven Social Platforms

Moltbook’s situation highlights a larger issue facing AI-based social networks. While artificial intelligence can enhance user experience, it also introduces new layers of risk. Data privacy, algorithm transparency, and accountability become far more complex when AI systems actively shape conversations.

Regulators and users alike are increasingly demanding clarity on how AI platforms operate. Without strong governance and open communication, growth driven by hype can quickly stall.

What Moltbook Needs to Do to Regain Confidence

For Moltbook to rebuild trust, experts suggest several critical steps:

Clear disclosure of data collection and usage policies

Independent security audits and public reporting

Stronger safeguards against misuse and manipulation

Transparent explanations of AI moderation systems

Active engagement with user concerns and feedback

Addressing these areas could help the platform move from viral curiosity to sustainable innovation.

A Reality Check for AI Hype Cycles

Moltbook’s rise and slowdown serve as a reminder that not every viral AI product matures into a lasting success. While innovation sparks interest, long-term adoption depends on security, ethics, and trust.

As users become more informed about AI risks, platforms can no longer rely solely on novelty. Responsible design and transparency are now non-negotiable.

Final Takeaway

Moltbook entered the scene with bold promises and impressive momentum. But security concerns and growing skepticism have punctured the early hype, forcing a more serious conversation about responsibility in AI-driven social spaces.

The platform’s future will depend on how well it addresses these challenges. In the evolving AI landscape, trust is not optional. It is the real currency of growth.

Covering startup news, AI, technology, and business at ThePrimely. Delivering accurate, in-depth reporting on the stories that shape the future.